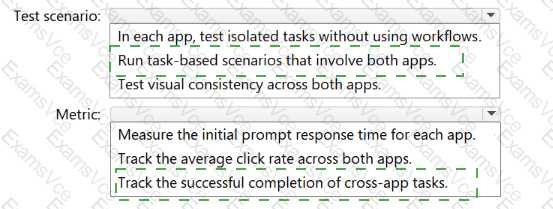

Test scenario → Run task-based scenarios that involve both apps; Metric → Track the successful completion of cross-app tasks

Comprehensive and Detailed Explanation From Agentic AI Business Solutions Topics:

The correct answer is D:

Test scenario → Run task-based scenarios that involve both apps

Metric → Track the successful completion of cross-app tasks

Why this test scenario is correct

The question explicitly asks for an end-to-end testing design for a Copilot Studio agent that integrates with two Dynamics 365 applications.

The required capabilities are:

- coordinating tasks and data interactions across both apps
- interpreting user input and returning contextually relevant outputs

That means the testing approach cannot be isolated to one app at a time. It must validate the agent’s behavior across the full multi-application business workflow.

That is why the correct test scenario is:

Run task-based scenarios that involve both apps

This kind of test validates whether the agent can successfully move through realistic business processes such as:

- reading customer context from one app
- updating or retrieving related sales information in the other
- maintaining context through the workflow
- responding appropriately based on user intent across systems

From an agentic AI business solutions perspective, this is the right design because enterprise agent validation must focus on workflow execution, not just component-level checks.
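To make the idea of a task-based, cross-app scenario concrete, here is a minimal Python sketch. The `Step` and `Scenario` classes and the app labels `app_a` and `app_b` are hypothetical illustrations, not part of Copilot Studio or Dynamics 365; a real test would drive the agent through its own test tooling and record the outcome of each step.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Step:
    app: str      # hypothetical app label, e.g. "app_a" or "app_b"
    action: str   # what the agent is expected to do in that app
    passed: bool  # whether the step produced the expected result


@dataclass
class Scenario:
    name: str
    steps: List[Step] = field(default_factory=list)

    def is_cross_app(self) -> bool:
        # A scenario is cross-app only if its steps touch at least two apps.
        return len({step.app for step in self.steps}) >= 2

    def completed(self) -> bool:
        # The task counts as completed only if every step passed.
        return bool(self.steps) and all(step.passed for step in self.steps)


scenario = Scenario(
    name="look up customer, then update related sales record",
    steps=[
        Step(app="app_a", action="read customer context", passed=True),
        Step(app="app_b", action="update related sales information", passed=True),
    ],
)
print(scenario.is_cross_app(), scenario.completed())
```

A scenario whose steps stay inside one app would fail the `is_cross_app` check, which mirrors why isolated per-app tests do not satisfy the end-to-end requirement.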

Why this metric is correct

The best metric is:

Track the successful completion of cross-app tasks

This is the most direct way to measure whether the agent is actually achieving the intended business outcome across both Dynamics 365 applications.

Why this matters:

- The requirement is about coordination across apps
- The test is end-to-end
- The goal is not just speed or UI consistency
- The agent must complete business tasks successfully across systems

A cross-app completion metric shows whether the agent can:

- understand the user’s request
- maintain context
- retrieve or update the right information
- finish the workflow correctly across app boundaries

This is much more meaningful than measuring clicks or simple response time.
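As a rough illustration of how such a metric could be computed, the sketch below takes a list of test results and reports the share of cross-app tasks that finished successfully. The function name, the result format, and the sample data are assumptions made for this example; they do not come from Copilot Studio.

```python
def cross_app_completion_rate(results):
    """Return the fraction of cross-app tasks that completed successfully.

    `results` is a list of (apps_touched, completed) pairs, where
    `apps_touched` is a set of hypothetical app labels and `completed`
    is True when the whole task finished with the expected outcome.
    Returns None when there are no cross-app tasks to measure.
    """
    # Only tasks that span at least two apps count toward the metric.
    cross_app = [done for apps, done in results if len(apps) >= 2]
    if not cross_app:
        return None
    return sum(cross_app) / len(cross_app)


# Illustrative sample data, not real test output.
results = [
    ({"app_a", "app_b"}, True),
    ({"app_a", "app_b"}, False),
    ({"app_a"}, True),  # single-app task, excluded from the metric
    ({"app_a", "app_b"}, True),
]
rate = cross_app_completion_rate(results)
print(f"{rate:.2f}")  # prints 0.67
```

The single-app task is left out of the denominator on purpose: it may pass, but it says nothing about whether the agent can carry a workflow across app boundaries.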

Why the other options are incorrect

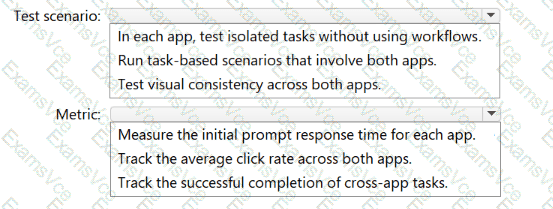

A. In each app, test isolated tasks without using workflows / Measure initial prompt response time

This fails the end-to-end requirement. Isolated tasks do not validate cross-app orchestration, and response time does not prove successful workflow execution.

B. Run task-based scenarios that involve both apps / Track average click rate across both apps

The scenario part is good, but average click rate is not the right success metric for Copilot task orchestration. Clicks do not reliably measure whether the business process was completed correctly.

C. Test visual consistency across both apps / Track successful completion of cross-app tasks

The metric is good, but the test scenario is wrong. Visual consistency is a UI concern, not an end-to-end functional validation of cross-app agent behavior.

Expert reasoning

For exam questions like this:

- If the requirement says end-to-end across multiple apps, choose task-based scenarios involving both apps
- If the goal is business workflow success, choose a metric tied to task completion, not visual design, click rate, or raw response speed