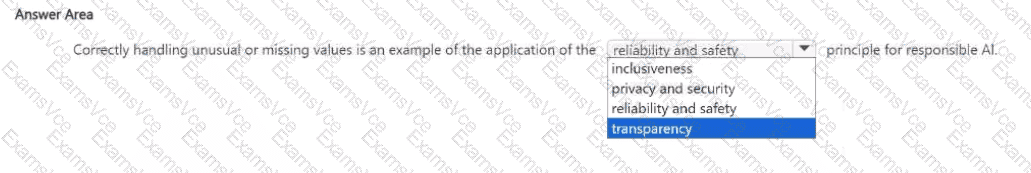

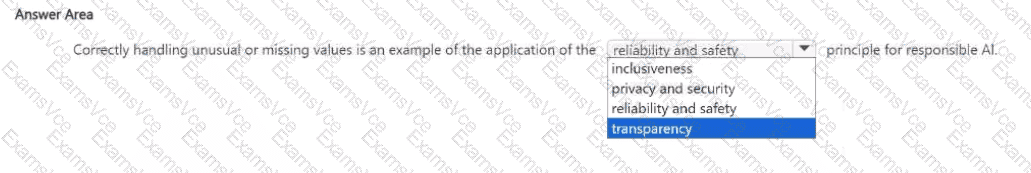

In Microsoft’s Responsible AI framework, the Reliability and Safety principle states that AI systems should perform consistently, safely, and as intended across diverse conditions, including when they encounter incomplete, unusual, or unexpected data. Correctly handling unusual or missing values in a dataset is a direct application of this principle, because it helps prevent faulty predictions, skewed results, and unsafe system behavior.

According to the Microsoft Learn Responsible AI module (from the AI-900 and AI-102 study paths), a reliable AI model should maintain its performance when it encounters data anomalies. This includes validating inputs, managing missing or extreme values, and testing models to confirm they behave as expected in real-world scenarios. These practices make AI systems more robust and trustworthy, which is what the Reliability and Safety principle calls for.
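To make "managing missing or extreme values" concrete, here is a minimal sketch in plain Python. It is only an illustration, not a method prescribed by Microsoft Learn: the function name `clean_values`, the choice of median imputation, and the `lower`/`upper` bounds are all assumptions made for this example.

```python
import math
from statistics import median


def clean_values(values, lower, upper):
    """Fill missing entries with the median, then clip everything to [lower, upper].

    Hypothetical helper for illustration; median imputation and clipping are
    just one reasonable strategy among several.
    """

    def is_missing(v):
        # Treat both None and NaN as missing data.
        return v is None or (isinstance(v, float) and math.isnan(v))

    present = [v for v in values if not is_missing(v)]
    if not present:
        # Fail loudly instead of silently producing unreliable output.
        raise ValueError("no valid values to impute from")

    fill = median(present)
    filled = [fill if is_missing(v) else v for v in values]

    # Clip extreme values so a single outlier cannot dominate downstream logic.
    return [min(max(v, lower), upper) for v in filled]


readings = [10.0, None, 12.0, float("nan"), 500.0]
print(clean_values(readings, lower=0.0, upper=100.0))
# prints [10.0, 12.0, 12.0, 12.0, 100.0]
```

The median is used here because it is less sensitive to outliers than the mean, and the function raises an error when there is nothing valid to impute from, so the failure is visible instead of hidden.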

The other Responsible AI principles mentioned here address different concerns:

Inclusiveness: Ensures AI empowers and serves all users equitably.

Privacy and Security: Focuses on safeguarding personal data and preventing unauthorized access.

Transparency: Ensures that AI decisions are understandable and explainable to users.

While all principles are essential, data integrity and system stability, including how a model responds to missing or anomalous values, are primarily a matter of reliability and safety. Handling such values correctly keeps the AI system's behavior predictable and reduces the risk of errors or unintended harm.

Therefore, the correct completion of the sentence is:

“Correctly handling unusual or missing values is an example of the application of the Reliability and Safety principle for Responsible AI.”