In the Microsoft Azure AI Fundamentals (AI-900) and Azure Machine Learning (AML) learning paths, deploying a real-time inference pipeline means making a trained machine learning model available as a web service that accepts incoming data and returns predictions with low latency, one request at a time. To do this, the model must be deployed to infrastructure that can handle continuous, low-latency requests reliably and at scale.

The Azure Machine Learning documentation gives the following guidance:

For testing or development, you can deploy to Azure Container Instances (ACI) because it provides a lightweight, temporary environment suitable for small-scale or non-production workloads.

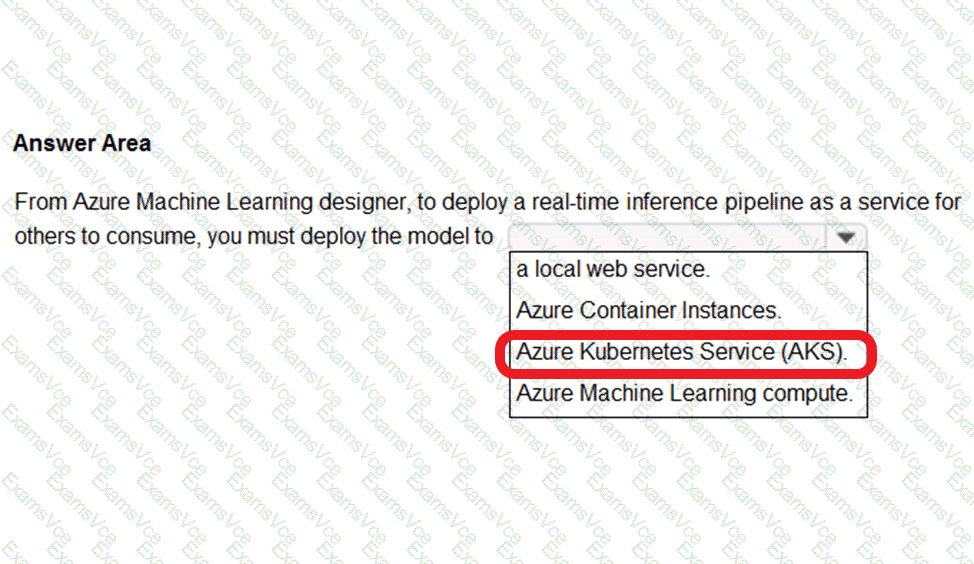

For production-grade, real-time inference, the deployment should be made to Azure Kubernetes Service (AKS).

AKS provides enterprise-level scalability, load balancing, and high availability, which are critical for serving real-time predictions to multiple consumers simultaneously. It manages containerized applications using Kubernetes orchestration, allowing the model to scale automatically based on traffic demands.
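To make "scale automatically based on traffic demands" concrete, the number of replicas a workload needs can be estimated with simple arithmetic: concurrent requests = target requests per second × seconds per request ÷ target utilization, then divided by how many requests one container handles at once and rounded up. The sketch below is a minimal illustration of that estimate, modeled on the kind of capacity calculation the Azure Machine Learning AKS deployment docs describe. The helper names `estimate_replicas` and `clamp_replicas` are hypothetical, and the defaults (70% target utilization, 1 to 10 replicas) are assumptions to check against the AKS deployment-configuration reference.

```python
import math


def estimate_replicas(target_rps: float, request_seconds: float,
                      max_requests_per_container: int = 1,
                      target_utilization: float = 0.7) -> int:
    """Estimate container replicas needed for a target load (hypothetical helper)."""
    if target_rps < 0 or request_seconds < 0:
        raise ValueError("load figures must be non-negative")
    if not 0 < target_utilization <= 1:
        raise ValueError("target_utilization must be in (0, 1]")
    if max_requests_per_container < 1:
        raise ValueError("each container must handle at least one request")
    # Requests in flight at once, padded so replicas run at the target utilization.
    concurrent_requests = target_rps * request_seconds / target_utilization
    # Round up: a fractional replica still needs a whole container.
    return math.ceil(concurrent_requests / max_requests_per_container)


def clamp_replicas(needed: int, min_replicas: int = 1, max_replicas: int = 10) -> int:
    """Keep the estimate inside the autoscaler's assumed min/max bounds."""
    return max(min_replicas, min(needed, max_replicas))


if __name__ == "__main__":
    needed = estimate_replicas(target_rps=20, request_seconds=10)
    print(needed, clamp_replicas(needed))
```

With 20 requests per second that each take 10 seconds, the estimate is 286 replicas, far above the assumed cap of 10. In practice that gap is a signal to raise the replica limit, give each container more concurrency, or make the model faster.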

Azure Machine Learning Compute is mainly used for model training and batch inference pipelines, not real-time endpoints. A local web service is typically used only for debugging or offline testing on a developer machine and cannot be shared for external consumption.

Therefore, when deploying a real-time inference pipeline as a service for others to consume, the correct option is Azure Kubernetes Service (AKS). It provides production readiness, secure endpoint management, and scalability for live AI applications, in line with the Azure Machine Learning designer documentation and the AI-900 exam objectives.
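Once the pipeline is deployed, "others consume" it by sending HTTP POST requests with JSON input to the endpoint's scoring URL and reading back JSON predictions. The sketch below shows that client side using only the Python standard library. It assumes key-based authentication (the key sent as a bearer token) and a designer-style payload of the form `{"Inputs": {"WebServiceInput0": [...]}, "GlobalParameters": {}}`; the input name, endpoint URL, key, and feature names are placeholders, so confirm the exact shape on the endpoint's Consume tab. The helpers `build_scoring_request` and `score` are hypothetical names.

```python
import json
import urllib.request
from typing import Any, Dict, List


def build_scoring_request(scoring_uri: str, api_key: str,
                          records: List[Dict[str, Any]],
                          input_name: str = "WebServiceInput0") -> urllib.request.Request:
    """Build, but do not send, a POST request for a real-time endpoint.

    The payload shape and default input name are assumptions based on the
    designer's sample consumer code; confirm them on the endpoint's Consume tab.
    """
    payload = {"Inputs": {input_name: records}, "GlobalParameters": {}}
    headers = {
        "Content-Type": "application/json",
        # Key-based authentication (assumed): the endpoint key is sent as a bearer token.
        "Authorization": "Bearer " + api_key,
    }
    return urllib.request.Request(
        scoring_uri,
        data=json.dumps(payload).encode("utf-8"),
        headers=headers,
        method="POST",
    )


def score(request: urllib.request.Request, timeout: float = 30.0) -> Any:
    """Send the request and decode the JSON predictions returned by the service."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Placeholder endpoint, key, and feature names; nothing is sent here.
    req = build_scoring_request(
        "https://example.invalid/score", "YOUR-KEY",
        [{"feature_a": 1.0, "feature_b": "x"}],
    )
    print(req.get_method(), req.full_url)
```

Building the request separately from sending it keeps the payload and headers easy to inspect; calling `score(req)` against a real AKS-hosted endpoint performs the actual network call.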

https://docs.microsoft.com/en-us/azure/machine-learning/concept-designer#deploy